F2IDiff: Feature-to-Image Diffusion for Smartphone Super-Resolution

Overview

F2IDiff is a real-world image super-resolution project focused on smartphone camera pipelines at Samsung Research America. The core idea is to replace loose text-only conditioning in diffusion models with richer visual features, specifically DINOv2 features, so that the model can recover details while staying faithful to the captured scene. This is especially important for flagship smartphone cameras, where the low-resolution input already contains strong structure and only needs controlled enhancement rather than aggressive hallucination.

Problem Setting

Most diffusion-based super-resolution systems inherit priors from large public text-to-image models. Those models are powerful, but in camera applications they often introduce texture that looks plausible without being true to the scene. This creates a mismatch between academic restoration benchmarks and production imaging requirements. In practical digital zoom and camera enhancement, preserving geometry, texture consistency, and user trust matters more than producing heavily stylized details.

Team Contribution

At Samsung Research America, we work on foundation-model-based single-image super-resolution systems for smartphone cameras. Our effort includes curating and organizing large-scale image, text, and feature datasets, designing DINOv2-conditioned training assets, and helping transition from text-only conditioning toward more faithful feature-driven pipelines. We also explore flow matching and model condensation strategies so the resulting systems are more realistic for mobile deployment.

Technical Approach

The project trains a Feature-to-Image Diffusion foundation model using DINOv2 features as the conditioning signal instead of text captions. This improves patch-level specificity, which is critical for high-resolution camera images that need to be processed in tiles. The approach also demonstrates that a carefully designed and curated training pipeline can outperform larger public baselines with a substantially smaller U-Net and a much smaller training corpus. That makes the work relevant not only for fidelity, but also for efficiency and practical system design.

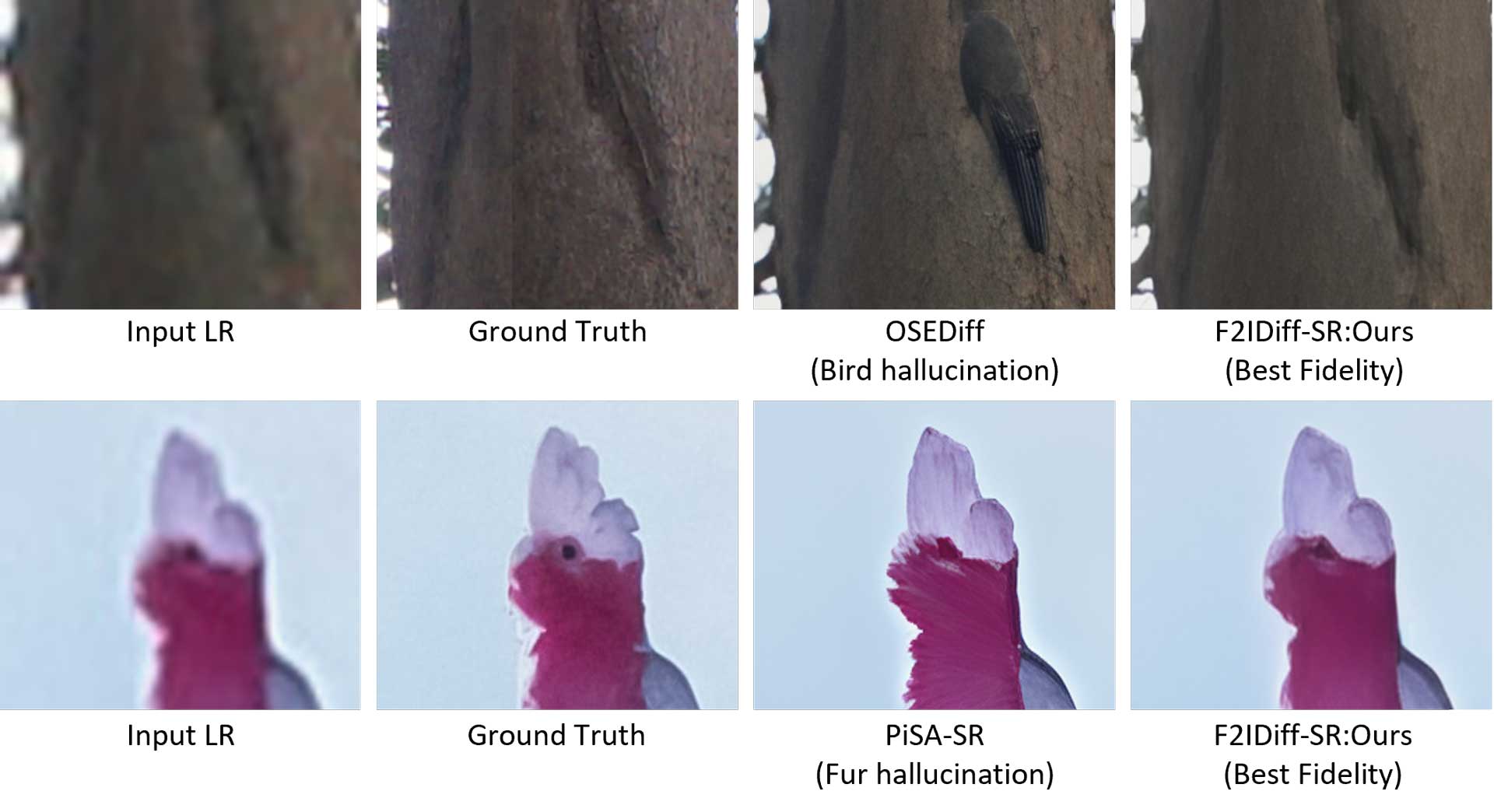

Results and Impact

The resulting model achieves stronger fidelity and less hallucination than text-conditioned baselines on real-world super-resolution tasks. It supports the broader thesis that smartphone imaging needs feature-aware and deployment-conscious generative models rather than direct reuse of generic text-to-image priors. This work also forms the basis of the F2IDiff paper, currently available as an arXiv preprint, and reflects our recent direction in camera-centric generative vision.