My Journey to Computational Photography

Exploring the intersection of optics and AI-based image processing, from DIY pinhole cameras to astrophotography and cutting-edge research

Senior Research Scientist, Samsung Research America

PhD, Computer Engineering, Arizona State University

I build foundation-model-based imaging systems for smartphone cameras, with particular emphasis on real-world single-image super-resolution, hallucination control, feature-conditioned diffusion, and efficient deployment-aware restoration.

At Samsung Research America, I work within the Mobile Processor Innovation Lab on diffusion-based and feature-driven models for camera quality enhancement, including F2IDiff. My doctoral research at Arizona State University focused on atmospheric turbulence mitigation, long-range imaging, and physically grounded simulation, including DAATSim.

Career

Senior Research Scientist (Computer Vision)

Developing foundation-model-based imaging systems for Samsung smartphone cameras with emphasis on faithful, mobile-friendly super-resolution.

Summer Internship

Applied computer vision and deep learning to degraded long-range video across ground, aerial, handheld, and satellite platforms.

Research Assistant

Research in computational imaging, atmospheric turbulence restoration, astrophotography, and perceptual quality under real-world degradation.

What I Do

Building robust vision systems for degraded, long-range, and real-world imaging scenarios.

Designing and training deep architectures grounded in physics and domain priors.

Advancing camera pipelines from RAW processing to super-resolution and astrophotography.

Applying AI to biomedical sensing, environmental monitoring, and long-range observation.

End-to-end research from novel algorithm design through publication and deployment.

Scaling computation with GPU acceleration, parallelism, and cloud infrastructure.

Research & Projects

Tools & Technologies

Research Output

arXiv 2025

Devendra K. Jangid, Ripon Kumar Saha, Dilshan Godaliyadda, Jing Li, Seok-Jun Lee, and Hamid R. Sheikh

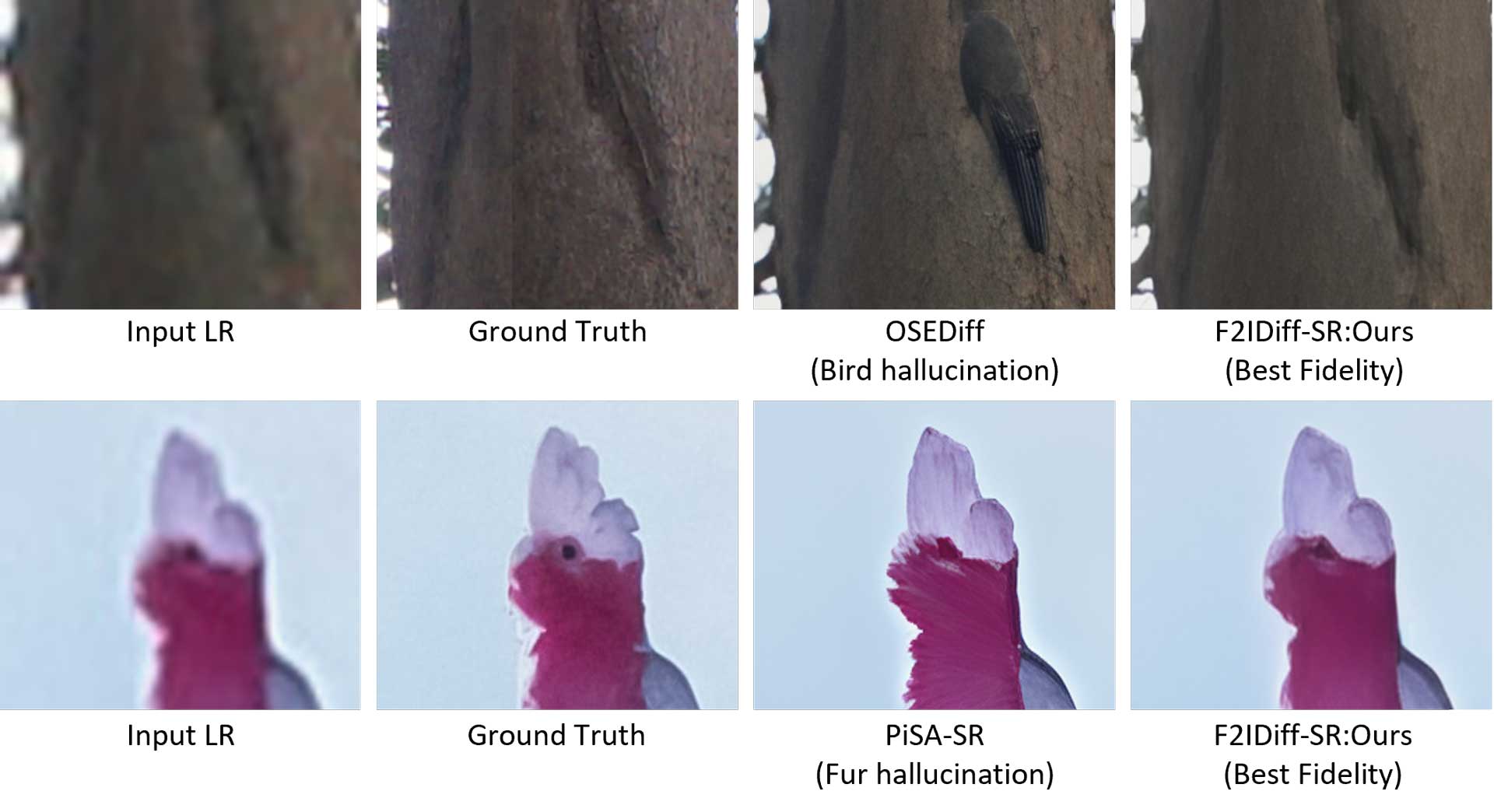

Feature-conditioned diffusion for high-fidelity smartphone super-resolution with stronger control and reduced hallucination.

Pacific Graphics 2025

Ripon Kumar Saha, Yufan Zhang, Jinwei Ye, and Suren Jayasuriya

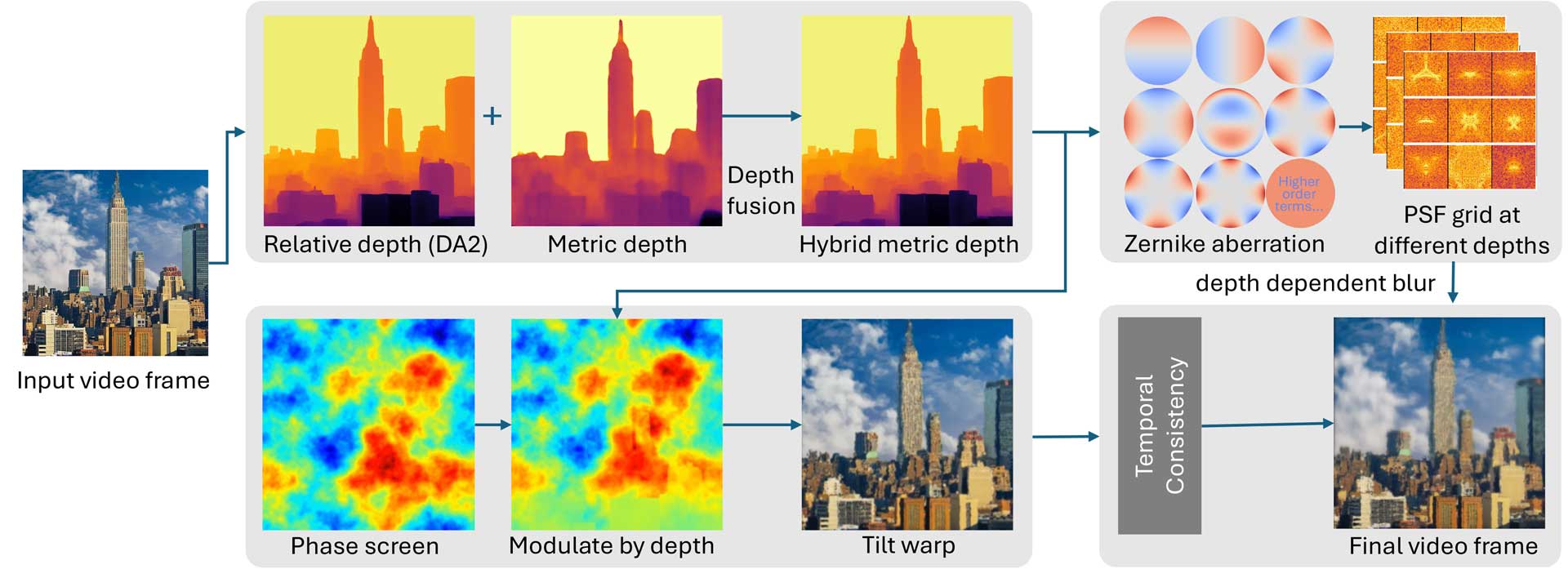

A depth-aware, physically grounded simulator for spatially varying atmospheric turbulence and temporally coherent rendering.

CVPR 2024

Ripon Kumar Saha, D. Qin, J. Ye, N. Li, and S. Jayasuriya

A dynamic-scene turbulence restoration pipeline that couples segmentation with restoration to preserve motion and detail.

WACV 2025

Ripon Kumar Saha, S. McCloskey, and S. Jayasuriya

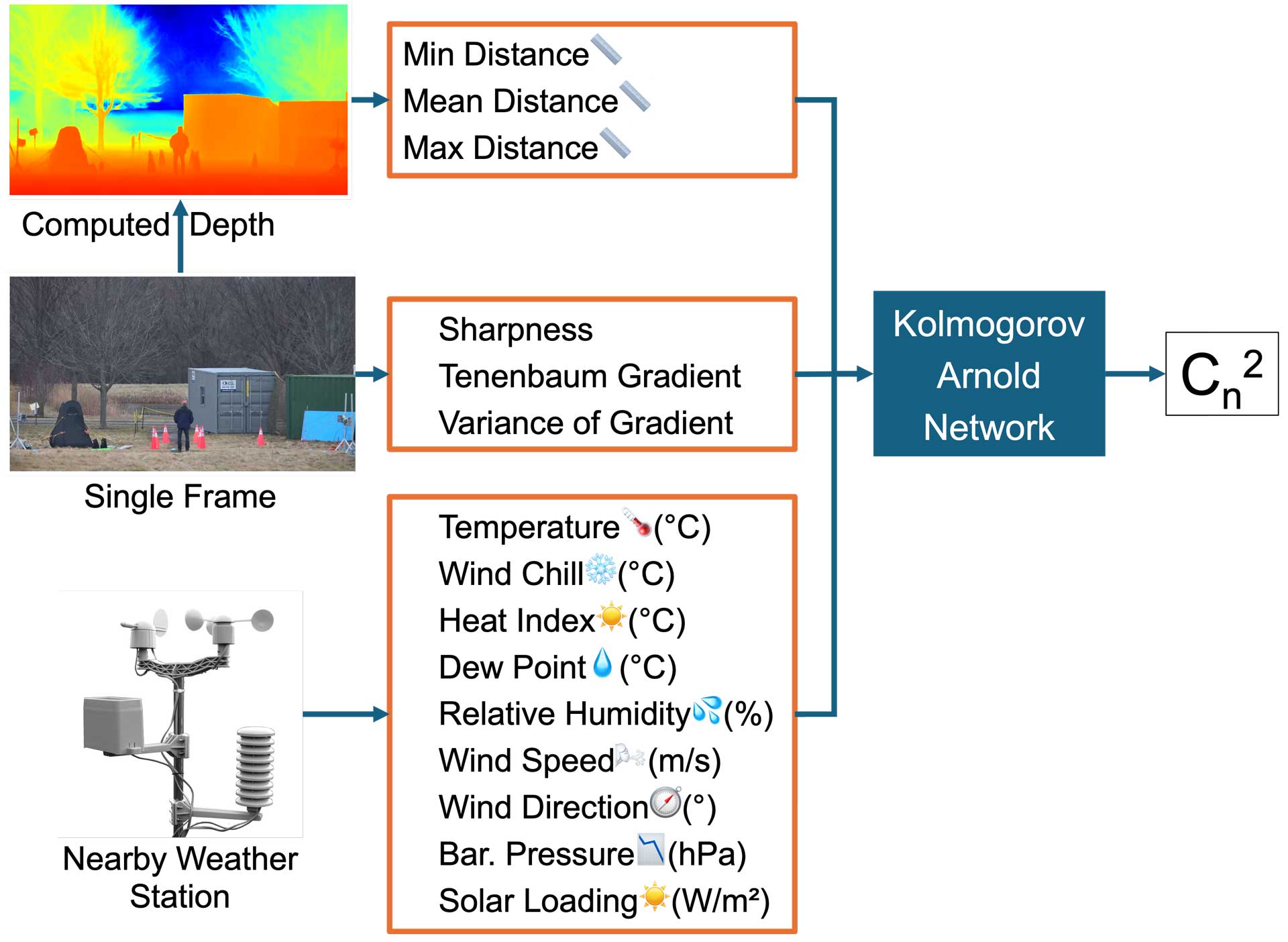

A multimodal framework that combines meteorological and visual cues to estimate atmospheric image degradation more accurately and robustly.

Thoughts & Stories

Exploring the intersection of optics and AI-based image processing, from DIY pinhole cameras to astrophotography and cutting-edge research

Our research on AI-powered dry eye syndrome diagnosis was featured in one of Korea's prominent news outlets

Recognition

Arizona State University ECEE

Research Excellence Award ($12,000)

Arizona State University

Winner in Information Sciences (PhD Category)

BuildwithAI Hackathon

Out of 4,000 participants from over 70 countries